Turn GPUs into Revenue

The Network Automation & Multi-Tenancy Platform for AI Cloud Operators

GPUs ≠ AI Cloud

Network automation and multi-tenancy, purpose-built for AI, is the critical foundation for maximizing the ROI of GPU infrastructure.

It empowers you with cloud-service-provider capabilities, enabling dynamic and secure sharing of hardware resources across multiple tenants and workloads.

Building In-House Network Automation Is a Trap

Building in-house automation takes years and a large budget, and 9 out of 10 companies regret it.

The result is fragile, expensive to maintain, and pulls focus away from the AI business. Teams get stuck hiring specialists, chasing bugs, and falling behind as new architectures emerge—essentially becoming a network automation company instead of a GPU cloud operator.

Traditional Network Controllers Are Structurally Unfit for AI

Traditional SDN Controllers and Fabric Managers were built for legacy enterprise datacenters, not AI.

They don’t support NVIDIA switches or DPUs, lack integration with InfiniBand and NVLink, and miss cloud-provider essentials like elastic IPs and load balancers. Without these, they simply can’t meet the automation and multi-tenancy demands of AI infrastructure.

Soft Isolation Is Not Enough For True Multi-Tenancy

VMs, containers, and other software-based methods provide soft isolation, but on their own they fall short of cloud-provider-grade security.

Software vulnerabilities or container escapes can breach these barriers, putting tenants and workloads at risk. That’s why GPU cloud providers rely on hard isolation enforced at the networking hardware level—across switches, DPUs, and fabrics—to guarantee secure, reliable multi-tenancy at AI scale.

“All-in-One” Falls Short

“All-in-one” platforms claim to do everything — networking, compute, platforms, NIMs, applications, billing, and more. But when a tool tries to cover everything, it specializes in nothing.

AI networking is too complex and too critical to be handled at a surface level. Without a cloud-provider-grade AI networking platform, operators face delays, outages, and compliance risks that lead to churn and reputational damage. Your networking foundation should not come bundled with your compute or AIOps stack. To stay competitive, you need the freedom to mix and match vendors — and continuously choose the most optimal combination of infrastructure and revenue channels.

Netris Your AI Network

The purpose-built platform for network automation and multi-tenancy. Trusted by AI cloud operators worldwide.

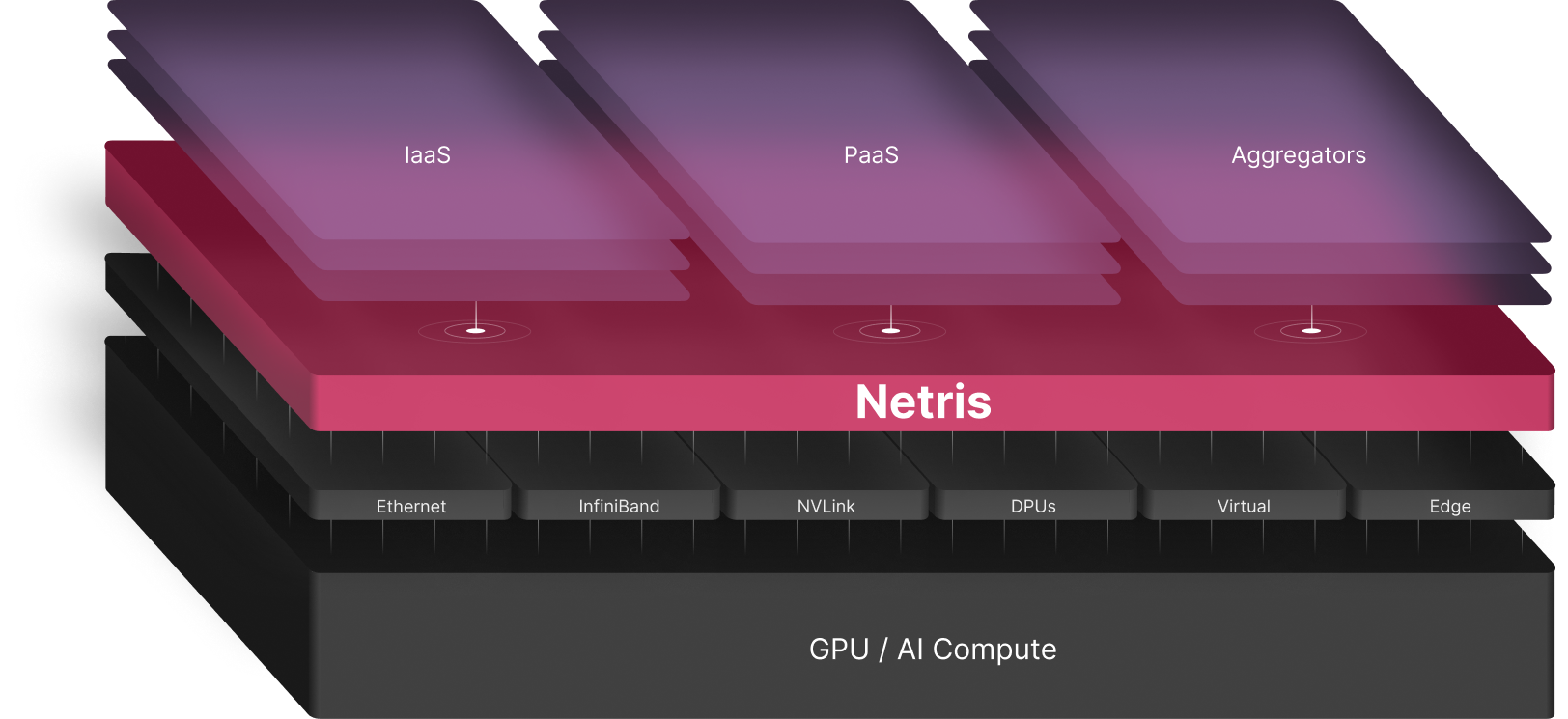

AI Networking Foundation

Netris is the foundational platform for AI infrastructure operators. It delivers cloud-provider-grade network automation, abstraction, and multi-tenancy across the entire AI networking stack: Ethernet, InfiniBand, NVLink, DPUs, virtual networking, and edge networking. Netris absorbs the complexity and networking nuances of various reference architectures, accelerating the launch and operation of your AI cloud.

Essential platform for GPU revenue generation

Automation

Netris delivers the missing automation layer for Ethernet and DPU fabrics, with best practices built into its auto-configuration algorithms. Engineers define the end state, and Netris configures VRFs, VXLANs, BGP, and everything else, all in under two minutes with closed-loop assurance. This speeds day-0/1 deployment and gives operators confidence and agility for day-2 maintenance.

Accelerate AI cloud launch and eliminate human errors
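To make the "define the end state" idea concrete, here is a minimal Python sketch of declarative, closed-loop configuration. It is an illustration under stated assumptions, not the Netris API or its algorithms: `TenantNetwork`, `render_switch_intent`, `drift`, and the field names are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class TenantNetwork:
    """Desired end state for one tenant network (hypothetical schema)."""
    tenant: str
    vni: int                             # VXLAN network identifier
    subnet: str
    switch_ports: dict[str, list[str]]   # switch name -> access ports


def render_switch_intent(net: TenantNetwork) -> dict[str, dict]:
    """Expand one declared network into per-switch settings.

    A real controller would emit vendor-specific VRF, VXLAN, and BGP
    configuration; this sketch only derives a neutral dictionary.
    """
    configs: dict[str, dict] = {}
    for switch, ports in sorted(net.switch_ports.items()):
        configs[switch] = {
            "vrf": f"vrf-{net.tenant}",
            "vni": net.vni,
            "subnet": net.subnet,
            "access_ports": sorted(ports),
        }
    return configs


def drift(desired: dict[str, dict], observed: dict[str, dict]) -> list[str]:
    """Closed-loop check: list switches whose observed state differs from desired."""
    return sorted(s for s, cfg in desired.items() if observed.get(s) != cfg)


# Illustrative values only.
net = TenantNetwork(
    tenant="acme",
    vni=10100,
    subnet="10.0.1.0/24",
    switch_ports={"leaf2": ["swp2"], "leaf1": ["swp3", "swp1"]},
)
desired = render_switch_intent(net)
needs_fix = drift(desired, observed={"leaf1": desired["leaf1"]})
```

The engineer writes only the `TenantNetwork` declaration. The per-switch settings are derived from it, and the drift check flags any switch (here `leaf2`) that does not yet match the declared state.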

Abstraction

Netris provides a cloud-grade network abstraction layer via web console and REST API, which is essential for AI cloud services. It enables AWS-style constructs like VPCs, peering, elastic IPs, load balancers, and more, while Netris algorithms translate them into precise configurations across Ethernet, InfiniBand, NVLink, DPUs, virtual networking, and edge networking, delivering simplicity over complex, multi-fabric infrastructure.

Deliver cloud-provider-grade networking services

Multi-Tenancy

Netris enables true multi-tenancy by providing hardware-level isolation across bare metal, VM, and container workloads. This hard isolation—now the industry standard for data sovereignty and compliance—is fully enforced on switches, DPUs, and fabrics. Operators simply define the endpoints, and Netris algorithms automatically generate and apply the correct configurations across all network elements.

Deliver dynamic and secure multi-tenancy

The Core Networking Layer for AI Clouds

Equalize AI Networking

Netris acts as an equalizer of networking: beneath the surface, it absorbs the complexity of each reference architecture, while on top it delivers a consistent, standardized experience. This enables cloud providers to adopt new RAs with confidence, without burning engineering cycles on constant adaptation.

Integrate Ecosystem Platforms

Netris serves as the baseboard for AI networking, enabling operators to maximize GPU utilization by mixing and matching IaaS and PaaS platforms, solutions, and GPU aggregator marketplaces without re-engineering the network. This opens new revenue channels and positions operators to maximize returns in the AI economy.

Future-Proof Without Lock-In

Netris frees operators from being tied to a single hardware platform, architecture, compute orchestration software, or aggregator channel. With Netris, operators can continuously leverage best-of-breed components and establish predictable costs to achieve long-term profitability.

Turn Infrastructure into Revenue

Start generating revenue sooner by avoiding the delay associated with in-house automation development.

Win more business with cloud-provider-grade services that competitors can’t match.

Expand revenue streams through an ecosystem of GPU aggregators, marketplaces, and platforms.

Generate more revenue per node and per GPU with granular, dynamic, and hard isolation.

Minimize customer waiting time and GPU waste with immediate, error-free network reconfigurations.

Reduce CapEx by reusing hardware resources across multiple tenants and workloads.

Lower CapEx by mixing and matching best-of-breed hardware and software at the right price point, without being locked in.

Decrease OpEx by eliminating the cost of developing and maintaining in-house network automation.

Production-Proven

Netris NAAM is essential infrastructure for any GPU cluster at AI factory scale. Our collaboration with Netris supports the operational scalability and flexibility required for next-generation AI Factory infrastructure.

Neo Yao

CEO, Visionbay

Netris simplifies network automation and makes hyper-scaler-level functionality accessible.

Darrick Horton

CEO, TensorWave

Netris is the networking foundation that makes our AI Factory run and makes our Token Factory possible.

Saeed Otufat-Shamsi

Director of Engineering & AI Factory Lead, TELUS

With the Netris platform, organizations can accelerate time to production, streamline network operations, and build a foundation for autonomous, agent-driven AI infrastructure.

Yael Asseraf Shenhav

VP SuperNIC and DPU Products & Business, NVIDIA

Without Netris, provisioning a tenant would take days or weeks. With Netris, it’s seconds.

Dominic Romeo

Director of Technology Service, Scott Data

Netris provides the network-level abstraction and segmentation that makes secure, cloud-scale multi-tenancy possible – including for HIPAA-regulated workloads.

Sabur Mian

Founder & CEO, STN

Netris integration with NVIDIA DSX Air provides a powerful environment to architect and validate the entire networking stack in parallel with hardware delivery, drastically accelerating time to value.

Amit Katz

VP Networking Products, NVIDIA